One evening, I was staring at my screen watching our designer work with Mindy on a design request, and something felt strange.

Mindy is our virtual agent. All we did that day was @ her in Linear and ask her to implement an updated design. A few minutes later, she had finished the changes, committed the code, merged it, deployed to staging, and pinged us to review.

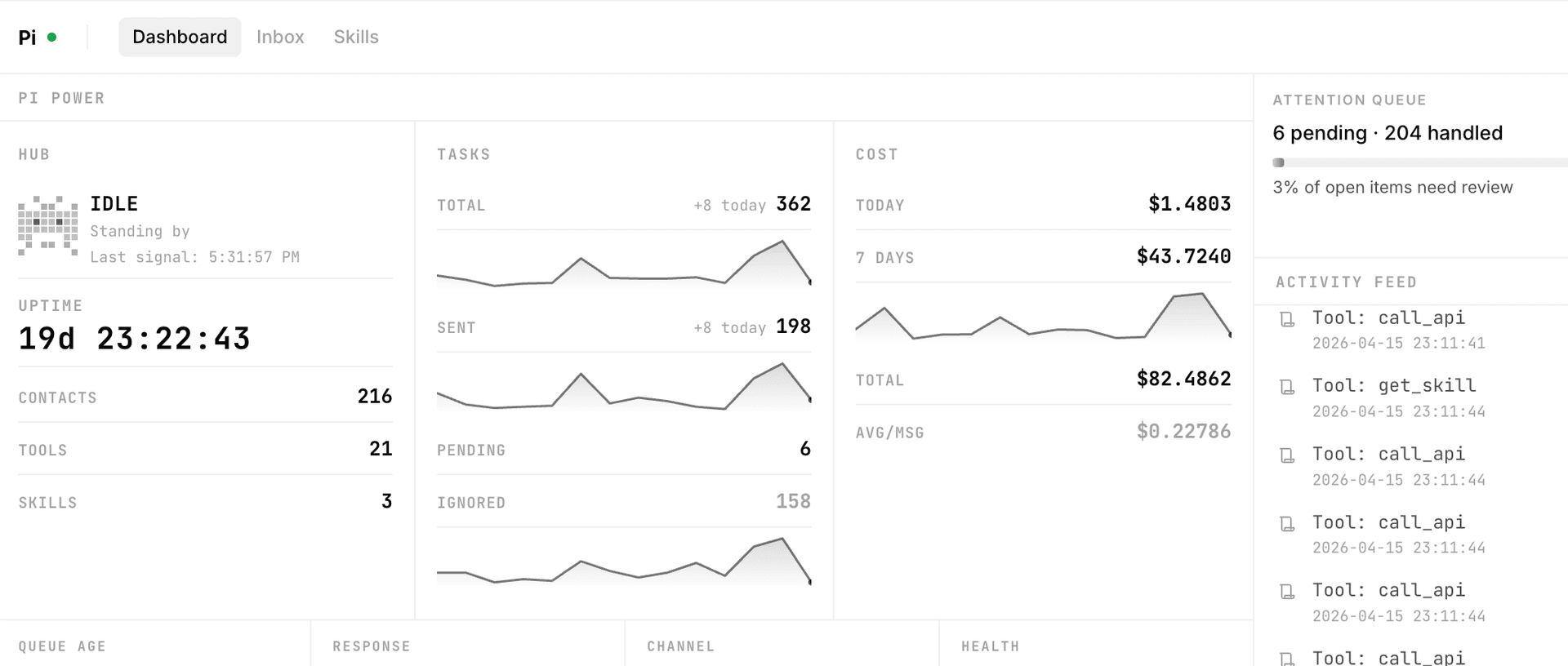

The feeling wasn't excitement. It was closer to vertigo. We're a team of just over twenty people, but at that moment, several agents were running in parallel. Mindy was writing code. Pi was handling customer inquiries. Other agents were doing their own things. And we, the human colleagues, were in a meeting talking about next month's product direction.

That was the first time it truly hit me: YouMind's headcount is no longer just people.

From Single Tasks to Full Workflows

From "help me do one thing" to "run this entire pipeline for me."

I didn't always think this way.

A year ago, we were still operating in the most traditional fashion: product, engineering, design, ops, support. Everyone had a clear role. AI was just a fancy assistant for us: draft some copy, generate a few images, write a bit of code. We used to joke about it, calling ourselves an AI-native company while collaborating like it was still 2020.

The shift started with something mundane.

Around January, we were discussing how to get user feedback flowing to us more naturally, with proper context. The first instinct was to buy a CRM. But then I thought: why not build one? In about two days, using Claude Code as the foundation, I wired up email and a few other feedback channels into a dead-simple, AI-native CRM.

It ran stably on my own setup for about a week, and it genuinely solved the email side of customer relationship management with almost no effort.

That experience changed how I saw things. The one-person system is becoming real. Going from a need to a fully delivered workflow is no longer theoretical. I didn't need anyone else involved, and yet it covered what used to require a small team.

People Never Wanted Tools. They Wanted Outcomes.

Once I realized I could replace a CRM in two days, I started rethinking a bigger question: if collaboration is changing, what happens to the tools we've always relied on?

The answer is brutal: when outcomes can be delivered directly, tools fade into the background.

This isn't about SaaS being bad. But think about it. Nobody ever wanted a tool. You buy a CRM because you want to close more deals. You use project management software because you want projects shipped on time.

What people want has always been outcomes. SaaS sells you the right to use a tool, charges you monthly per seat, and whether it actually delivers results is your problem. But when AI can deliver results directly, that whole logic falls apart.

Here's what it feels like from my end: buying a system used to mean evaluation, procurement, deployment, training, weeks or even months of setup. Now we spin up an agent tailored to our own business in maybe two days. And it's not some generic tool. It's built specifically for how we work.

So I'm increasingly convinced that SaaS and apps will evolve into agents. The industry giants can see it. Most people just haven't felt it yet.

Some will say: aren't there already out-of-the-box agent platforms? Honestly, my experience is that they feel more like toys. Fine for playing around, but if you need stable output in a real business, you still have to build it yourself. Which actually points to something interesting: building agents for others is going to be a real business.

Building Agents Isn't Hard. Everything Else Is.

When it comes to building your own, most people assume it's difficult. Our engineers were skeptical too. They figured anything vibe-coded wouldn't survive production. But once I took an open-source agent framework and built our own internal platform with it, the team realized that solutions at this stage are a lot more accessible than expected.

That said, easy to build doesn't mean smooth sailing. After hitting enough walls, I'd say there are three real challenges: correctness, runtime environment, and maintainability.

Correctness is the one that hurts the most. A few days ago I was building a recording app. The AI kept insisting I needed to disable the sandbox to access audio data. It wasn't until I sat down with our engineers that we found a solution that didn't require turning off the sandbox at all, and it turned out Apple had their own demo for it. But the AI didn't know that, and I didn't either. When neither side knows, you can end up spinning your wheels in a dead end. You'll probably solve it eventually, but not elegantly.

AI can do the work, but it doesn't know what "right" looks like. That judgment call, at least for now, still belongs to humans.

As for runtime environment and maintainability: there's no one-size-fits-all answer for environments. File systems, terminals, and web APIs each have their place, and the key is figuring out what kind of workbench your agent actually needs. Maintainability is similar. Design the architecture from day one. Don't wait until you've got a mountain of code before trying to fix things. And if you do need to start over, it's only a couple of hours anyway.

Permissions Make Agents Worse. Principles Make Them Better.

Here's something we learned the hard way, and it might sound counterintuitive: don't restrict your agent with permissions. Guide it with principles.

My first instinct was the traditional approach. This it can do, that it can't. Whitelists, blacklists. I was so worried about things going wrong that I layered on all kinds of guardrails. But what happened was the agent basically degraded into a glorified automation script. The whole point of an agent is that it can make judgment calls in ambiguous situations. If you eliminate all the ambiguity, what's left?

So I changed my approach. Instead of telling it what it couldn't do, I told it what to do when certain situations came up. Think of it like onboarding a new team member. You wouldn't hand them a list of prohibitions. You'd say: when a customer complains, acknowledge their feelings first, log the issue, and if the amount is above a certain threshold, loop me in.

Control becomes guidance.
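
To make the onboarding example concrete, here is a minimal sketch in Python. It is an illustration only, not Pi's actual configuration: the `Principle` class, the wording of each principle, and the `ESCALATION_THRESHOLD` value are all hypothetical. The idea is that principles are written as "when this happens, here is how we handle it" and handed to the agent as plain-language guidance, while the one checkpoint where a human must step in stays as a simple check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Principle:
    situation: str  # when this comes up...
    guidance: str   # ...this is how we would handle it


# Hypothetical principles, paraphrasing the onboarding example above.
PRINCIPLES = [
    Principle("a customer complains",
              "acknowledge their feelings first, then log the issue"),
    Principle("a refund or credit is requested",
              "handle it directly if it is small; otherwise loop in a human"),
]

# Hypothetical threshold; the real value is a business decision.
ESCALATION_THRESHOLD = 100.0


def render_guidance(principles: list[Principle]) -> str:
    """Turn principles into plain-language guidance for the agent's instructions."""
    lines = [f"- When {p.situation}: {p.guidance}." for p in principles]
    return "How we handle things here:\n" + "\n".join(lines)


def needs_human(amount: float, threshold: float = ESCALATION_THRESHOLD) -> bool:
    """The one hard checkpoint: amounts above the threshold go to a person."""
    return amount > threshold


if __name__ == "__main__":
    print(render_guidance(PRINCIPLES))
    print("Escalate a 250.00 request?", needs_human(250.0))
```

Note what is absent: there is no list of forbidden actions. Everything except the escalation check is left to the agent's judgment.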

This design philosophy is exactly why Pi surprised us after launch. One time, we were about to send a follow-up email to a user, and Pi jumped in: this user already received the same message on April 1st, sending it again would just be noise. Nobody programmed that rule. But guided by the principles we set, it made that call on its own.

This shift sounds simple, but it demands a lot from you. You need to understand your own business processes deeply: what the critical checkpoints are, where you can tolerate errors, and when a human absolutely needs to step in. A lot of people who say agents don't work well are really saying they haven't thought clearly about how their own business should run.

Humans Own Direction. Agents Own Process.

So when agents can do more and more, where does human value actually lie?

I've thought about this a lot. Skills are being leveled at an incredible pace. You can code, and so can an agent. You can design, and the agent can generate visuals. You can write, and it writes faster. If your value comes solely from being able to do a thing, you're in a vulnerable spot.

But watching how our own team has evolved, I've noticed two things that remain irreplaceable: systems thinking and insight into user needs.

Systems thinking means: can you architect a setup where multiple agents and humans collaborate without stepping on each other? Can you define clear boundaries and coordination patterns? This is the ability to make things happen. Agents don't organize themselves.

Insight into user needs means: can you see what users haven't articulated yet? AI is excellent at executing well-defined tasks, but deciding what to build is still a human job. The more precisely you define the goal, the better the agent delivers.

So in the age of AI, what I value most is still the ability to deliver outcomes. The process gets dramatically compressed. That doesn't mean process doesn't matter. It just becomes more predictable. Agents handle execution. Humans define the goals, validate results, and adjust course. Process belongs to the agent. Direction belongs to the human.

The Real Moat: Business Understanding Compounds

One last thing I only recently figured out.

Pi has been running for about a month now, and the quality of its responses has improved noticeably. Not because the model was upgraded, but because it has accumulated context about our business. It knows what customers ask most often. It knows what tone to use with different types of people. It remembers how much content each user has sent us, their story, their needs, even their offhand complaints. All of that has gradually become its memory.
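
One simple way to picture this kind of accumulation is sketched below in Python. I'm not claiming this is how Pi stores its memory; `BusinessMemory`, `remember`, and `context_for` are hypothetical names chosen for illustration. Each interaction leaves a short note per user, and the most recent notes are pulled back in as context the next time that user writes.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class BusinessMemory:
    """Hypothetical per-user memory that grows as the agent works."""
    notes: dict[str, list[str]] = field(
        default_factory=lambda: defaultdict(list)
    )

    def remember(self, user: str, note: str) -> None:
        # Skip exact duplicates so repeated observations don't pile up.
        if note not in self.notes[user]:
            self.notes[user].append(note)

    def context_for(self, user: str, limit: int = 5) -> str:
        # Only the most recent notes are handed back as context.
        recent = self.notes.get(user, [])[-limit:]
        if not recent:
            return f"No history with {user} yet."
        bullets = "\n".join(f"- {n}" for n in recent)
        return f"What we know about {user}:\n{bullets}"


if __name__ == "__main__":
    memory = BusinessMemory()
    memory.remember("ana", "prefers short replies")
    memory.remember("ana", "asked about export")
    print(memory.context_for("ana"))
```

A fresh agent starts with the empty case. An agent that has been running for a month starts every conversation with the notes already in place.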

A former colleague recently asked me where our "knowledge base" comes from. I told them it was built up organically through production. We didn't start with pre-written answers.

That made me realize something: the gap between an agent that's been running with you for months and a freshly built generic agent is enormous. That gap isn't about model capability. It's about depth of understanding of your business.

I call it the "understanding moat."

This might be the deepest moat in the agent era. Not a technology moat. Not a feature moat. Whoever understands your users and your business more deeply becomes irreplaceable. And this moat has a beautiful property: it only gets thicker over time. Technical advantages can be matched. Features can be copied. But an agent's accumulated understanding of your business? Someone starting from scratch will need months to catch up.

The Future Small Team: Don't Hire People. Deploy Agents.

Writing all this, I'm not sure every conclusion is right. Technology moves too fast. Half a year from now, some of these ideas might need revision.

But one thing I'm certain of: the "employees plus agents" model is no longer a question of whether for small teams. It's a question of when.

Mindy is still evolving. Sometimes her code isn't elegant. Sometimes she drops the ball during iterations. Pi is still learning too. Every now and then it stumbles when facing complex customer emotions. But they get better every day.

And we, the human colleagues, are learning a new way of working too. Not doing everything ourselves, but defining direction, setting principles, and then trusting our agents to execute. That trust builds gradually. You start with a small task, observe, verify, feel confident, then hand over something bigger. It's like onboarding a new hire. You wouldn't give them your most important client on day one. But if they keep performing well, you give them more and more room.

The future small team won't hire people. It will deploy agents.

Not because agents are better than humans. But because once you have agents, humans can finally do what only humans can do.